Cameras: It’s Complicated

By Dorota Czaplejewicz, May 9, 2022

Two years before I started working on cameras for the Librem 5, I thought the work would go something like this: first, write a driver, then maybe calibrate the colors, connect to the camera support infrastructure, and bam! PureOS users on the phone would then do teleconferences with Jitsi or snap selfies with Cheese, just like they can on laptops.

The title of this blog post already gives away what happened. I wrote a driver and connected it up, yet teleconferences remain in the future. In fact, our version of Megapixels is the only app that can shoot photos on the Librem 5 today, and that’s because it received special attention. How come? Aren’t cameras pretty simple in the end? Light in, pixels out. That’s pretty plug-and-play!

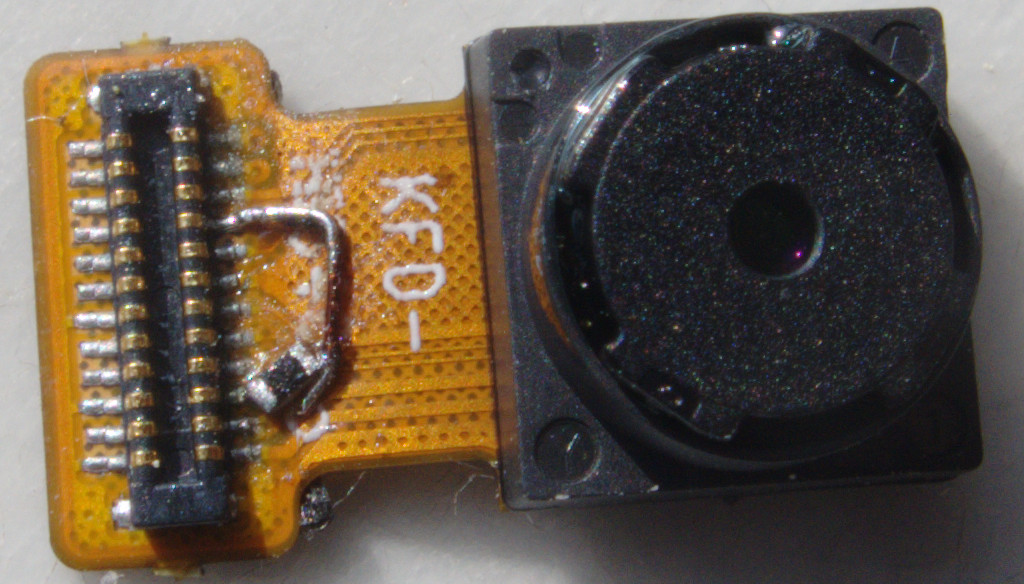

Well actually, no. Cameras can be made of a multitude of components, and the light sensor is just one piece of the puzzle. Some cameras output raw light values, but the whole module can also include parts like a flash, a focus motor, a zoom motor, or active image stabilization actuators. There are also Image Signal Processors (ISPs), which can do a multitude of tasks, like debayering, denoising, lens correction, color balance, color conversions, and more.
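To make "debayering" a little more concrete, here is a minimal sketch in Python. It assumes an RGGB mosaic layout (an assumption for illustration, not a statement about the Librem 5 sensor) and uses the crudest possible method: each 2x2 cell of raw values is collapsed into a single color pixel, which halves the resolution. A real ISP or library would interpolate at full resolution instead; `debayer_rggb_half` is a made-up name.

```python
def debayer_rggb_half(raw):
    """Collapse each 2x2 RGGB cell into one (R, G, B) pixel.

    `raw` is a list of rows of sensor values with even width and height.
    The result has half the width and half the height of the input.
    """
    height, width = len(raw), len(raw[0])
    if height % 2 or width % 2:
        raise ValueError("expected even dimensions for an RGGB mosaic")
    rgb = []
    for y in range(0, height, 2):
        row = []
        for x in range(0, width, 2):
            red = raw[y][x]
            # Each cell holds two green samples; average them.
            green = (raw[y][x + 1] + raw[y + 1][x]) // 2
            blue = raw[y + 1][x + 1]
            row.append((red, green, blue))
        rgb.append(row)
    return rgb


# A tiny 4x4 raw frame; every 2x2 cell is laid out as R G / G B.
raw_frame = [
    [200, 90, 180, 80],
    [110, 40, 100, 30],
    [60, 120, 50, 130],
    [140, 220, 150, 210],
]
image = debayer_rggb_half(raw_frame)
```

Each sensor pixel sees only one color, so the two missing colors of every output pixel have to come from its neighbors. That borrowing is the whole job of debayering, whichever interpolation method is used.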

But that’s not surprising. What’s unusual is that despite the diversity of camera modules, laptop cameras generally Just Work. They are so well supported under Linux because they are connected using a protocol called… USB. Yes, laptop cameras are connected over USB! And USB cameras universally support the UVC (USB video class) protocol, which requires the webcam to provide a video stream that applications can consume as is. It’s an amazing achievement to have such standardization.

However, USB is not the camera protocol typically used on phones. It’s not even universal on laptops! UVC is really good for basic video recording, because it provides just what typical applications need: automatic adjustment of brightness, focus, and color, and it also provides images that are already demosaiced. Those are difficult tasks, and completely out of scope for applications like the web browser or a chat client. They should be doing what they do best, rather than switch focus to image processing, and UVC helps them a lot by pushing those responsibilities to the camera hardware.
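As a taste of what "automatic adjustment of color" involves, here is a hedged sketch of the classic gray-world white balance heuristic in Python. It is not what any particular UVC camera does (that is up to the hardware vendor); it only illustrates the kind of work the camera takes off the application's hands. The function names and the sample frame are invented for this example.

```python
def gray_world_gains(pixels):
    """Return (r_gain, g_gain, b_gain) that equalize the channel averages.

    Gray-world assumes the scene averages out to neutral gray, so any
    difference between the channel averages is treated as a color cast.
    """
    count = len(pixels)
    if count == 0:
        raise ValueError("need at least one pixel")
    r_avg = sum(p[0] for p in pixels) / count
    g_avg = sum(p[1] for p in pixels) / count
    b_avg = sum(p[2] for p in pixels) / count
    # Green is the reference channel; avoid dividing by zero on dark frames.
    return (g_avg / max(r_avg, 1e-9), 1.0, g_avg / max(b_avg, 1e-9))


def apply_gains(pixels, gains, max_value=255):
    """Scale every channel by its gain and clip to the valid range."""
    return [
        tuple(min(max_value, round(value * gain)) for value, gain in zip(pixel, gains))
        for pixel in pixels
    ]


# A frame with a warm (reddish) cast, as a flat list of (R, G, B) pixels.
warm_frame = [(200, 100, 50), (100, 50, 25), (120, 80, 60), (180, 170, 65)]
gains = gray_world_gains(warm_frame)
balanced = apply_gains(warm_frame, gains)
```

With this sample frame, red is scaled down and blue is scaled up until all three channel averages match green. The heuristic fails on scenes that really are dominated by one color, which is one reason production algorithms are considerably more involved.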

If you’re a phone maker, you might not be satisfied with UVC taking over those tasks. You might want to have detailed access to the ISP part of your camera module. Or you might want to expose the raw sensor instead, just like the Librem 5 does. Then you can’t rely on UVC any longer.

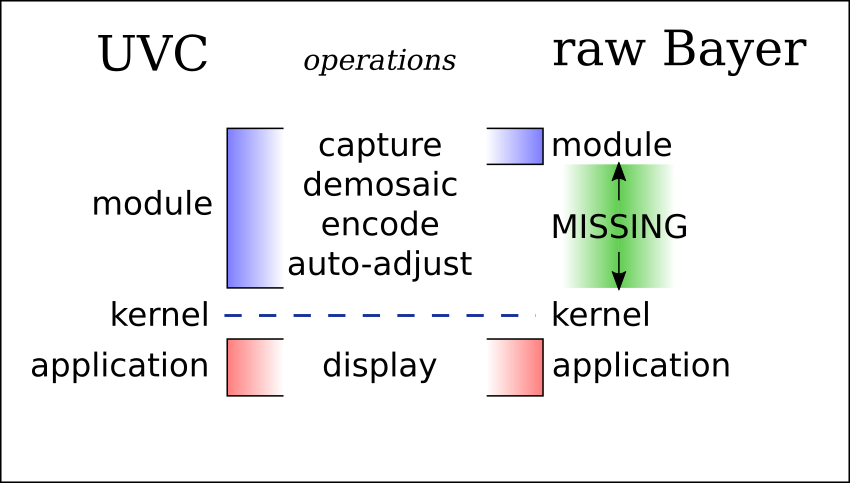

Let’s take another look at the processing steps that are really needed between the camera receiving light and an application presenting a video frame. On the left, there’s the UVC baseline; on the right, a raw Bayer sensor.

Oh no! There’s a huge gap in functionality when using a Bayer sensor! Our application can’t capture frames until the gap is filled. But what should fill it? We already established that the application should not be responsible for processing. Maybe we can squeeze it back into the module? Sadly, it’s not possible to add new functionality to existing hardware. What’s left is the kernel… perish the thought! Adding image processing code to the kernel is a terrible idea: any bug could crash the entire system. Let the kernel remain a path to access hardware, and that only.

There are no more options. Are we in a bind?

Wait a moment. If you zoom in on any typical application, you will see that… it’s actually not a single thing. It’s made of multiple libraries combined together, each responsible for a different task. For example, your email client doesn’t decode images, libpng does. And your movie player does not compile shaders needed to display the window, it uses an OpenGL library.

Where’s the library that provides highly processed video streams like those coming from UVC modules? It’s called libcamera.

Libcamera is not yet old and established like OpenGL. It’s relatively young, just like running GNU/Linux on devices with custom cameras is, so it’s currently not widely used. But once it becomes widespread enough, it could solve our problem of having plug-and-play camera support, regardless of the camera type. In fact, we already wrote about this in our photo processing tutorial.

That’s where the Librem 5 team is directing its camera efforts now. We still have a way to go: selecting capture resolutions, forwarding controls, debayering, and auto-adjustment algorithms all need work. We’re progressing slowly but steadily, helping the library overcome growing pains and get its internal structure and external API right. And once the functionality we need works, it will be available to everyone using libcamera: applications and other cameras will get it out of the box.
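For a feel of what an "auto-adjustment algorithm" looks like, below is a small Python sketch of an auto-exposure feedback loop. It is an illustration under stated assumptions, not libcamera code: the sensor is simulated by a made-up `simulated_brightness` function, exposure is in arbitrary units, and the target brightness and damping values are picked for the example.

```python
def simulated_brightness(scene_light, exposure):
    """Stand-in for a real sensor: mean frame brightness in 0..1, clipped."""
    return min(1.0, scene_light * exposure)


def adjust_exposure(exposure, brightness, target=0.5, damping=0.5,
                    limits=(0.001, 1.0)):
    """One step of a damped proportional auto-exposure loop.

    The exposure is nudged toward the value that would give `target`
    brightness. `damping` below 1.0 makes each step cover only part of
    the distance, which avoids visible flicker from overcorrection.
    """
    brightness = max(brightness, 1e-6)  # avoid dividing by zero on black frames
    proposed = exposure * (target / brightness) ** damping
    return min(limits[1], max(limits[0], proposed))


# Start overexposed in a bright scene and let the loop settle.
exposure = 0.5
for _ in range(20):
    brightness = simulated_brightness(4.0, exposure)
    exposure = adjust_exposure(exposure, brightness)
final_brightness = simulated_brightness(4.0, exposure)
```

The loop only ever sees the brightness of the previous frame, so it has to run continuously alongside capture. The first few frames in this simulation are clipped to pure white, and the loop still recovers because it keeps shortening the exposure until the reading becomes meaningful.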

Hopefully, by that time, libcamera will have reached critical mass, and all applications, including teleconferencing apps, will be able to use it without fuss. Then we’ll reach our goal. Meanwhile, you can help everyone get there by contributing. If you’re a student, libcamera also regularly mentors at Google Summer of Code.

See you on the other side (of a teleconference)!
